What is Replicate?

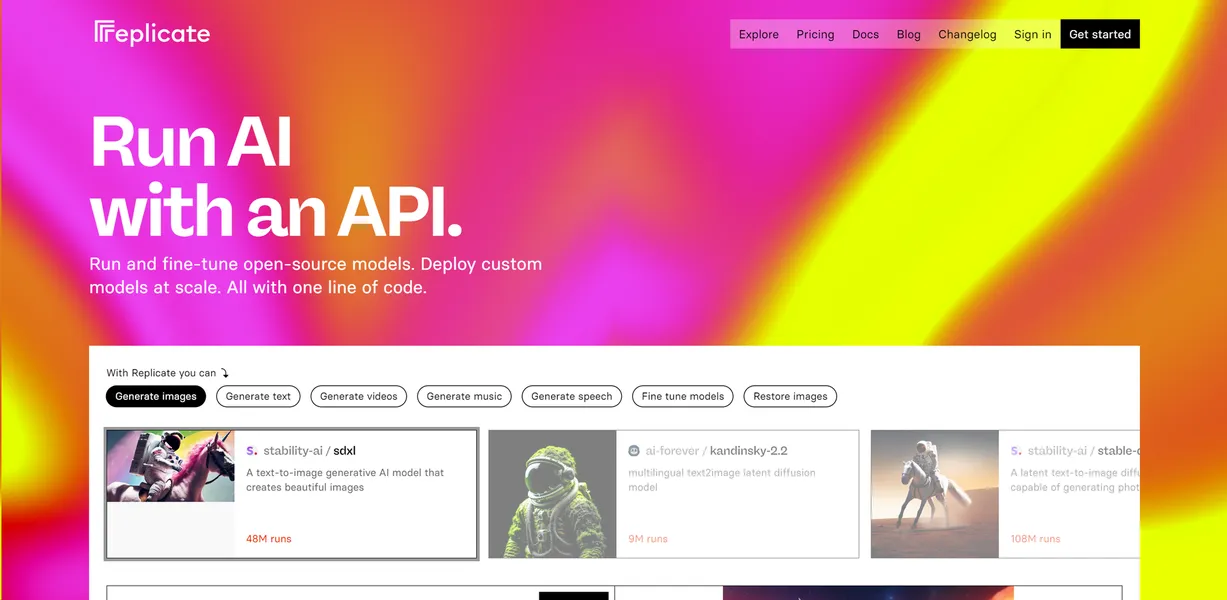

Replicate is a serverless cloud platform for running machine learning models through an API. You do not need to configure Kubernetes clusters or manage GPU drivers.

Replicate, Inc. built the platform to remove deployment bottlenecks for software developers. You can query over 25,000 open-source machine learning models, including Llama 3 and Whisper, using standard HTTP requests. Developers use it to build custom chatbot applications or generate high-resolution images without buying expensive hardware.

- Primary Use Case: Running and deploying open-source machine learning models through a REST API

- Ideal For: Solo developers and small teams building AI applications

- Pricing: Pay-as-you-go with no monthly subscription. Compute time is billed per second at a rate set by the hardware type.

Key Features and How Replicate Works

Model Deployment and API Access

- RESTful API: Run models with standard HTTP requests or through the official Python, JavaScript, and Go client libraries. Limit: Requires basic programming knowledge.

- Cog Containerization: Package custom machine learning models into standard Docker containers with Cog, Replicate's open-source packaging tool. Limit: Opaque error messages complicate debugging when a build fails.

- Webhooks: Receive asynchronous notifications for long-running prediction tasks. Limit: Reliable delivery depends on your receiving endpoint being publicly reachable and responding successfully.
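This Python sketch shows the plain-HTTP route, including how to register a webhook, using only the standard library. It builds a prediction request but does not send it. It assumes the following parts of Replicate's HTTP API:

- The `POST /v1/models/{owner}/{name}/predictions` endpoint for official models.
- A Bearer token read from the `REPLICATE_API_TOKEN` environment variable.
- The `webhook` and `webhook_events_filter` body fields.

Check these against the current API reference before relying on them.

```python
import json
import os
import urllib.request

# Assumed base URL of Replicate's HTTP API.
API_BASE = "https://api.replicate.com/v1"


def build_prediction_request(model, model_input, token=None, webhook=None):
    """Build (but do not send) a request that starts a prediction.

    `model` is an "owner/name" string for an official model. The endpoint path,
    auth header, and webhook fields are assumptions based on Replicate's public
    HTTP API.
    """
    token = token or os.environ.get("REPLICATE_API_TOKEN", "")
    body = {"input": model_input}
    if webhook:
        # Replicate calls this URL when the prediction changes state; the filter
        # limits notifications to the final "completed" event.
        body["webhook"] = webhook
        body["webhook_events_filter"] = ["completed"]
    return urllib.request.Request(
        url=f"{API_BASE}/models/{model}/predictions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

The following call sends the request:

```python
urllib.request.urlopen(build_prediction_request("black-forest-labs/flux-schnell", {"prompt": "a lighthouse at dusk"}, webhook="https://example.com/replicate-hook"))
```

The model name is only an example, and the webhook URL is a placeholder. The JSON response describes the new prediction, including its `status`.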

Hardware and Infrastructure

- Serverless Scaling: The platform automatically scales from zero to thousands of GPUs based on incoming traffic. Limit: Initial requests to inactive models take 10 to 30 seconds to boot.

- Hardware Selection: Choose specific compute instances, including T4, A10, and A100 GPUs. Limit: High-end A100 instances frequently face availability constraints during peak hours.
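To the client, a cold start looks like a prediction that stays in the `starting` state, so any polling code should budget for it.

This helper is a sketch that assumes the API reports the status values `starting`, `processing`, `succeeded`, `failed`, and `canceled`. The `fetch` argument is a hypothetical callable you supply, for example one that GETs the prediction's URL and parses the JSON. The sleep and clock functions are injectable so the loop can be tested without waiting.

```python
import time

# Assumed terminal prediction states; "starting" covers a model's cold boot.
TERMINAL_STATES = {"succeeded", "failed", "canceled"}


def wait_for_prediction(fetch, timeout=120.0, interval=1.0,
                        sleep=time.sleep, clock=time.monotonic):
    """Poll `fetch()` until the prediction reaches a terminal state.

    `fetch` is a hypothetical callable returning the prediction as a dict.
    The generous default timeout leaves room for a cold boot on an inactive model.
    """
    deadline = clock() + timeout
    while True:
        prediction = fetch()
        if prediction.get("status") in TERMINAL_STATES:
            return prediction
        if clock() >= deadline:
            raise TimeoutError(
                f"prediction still {prediction.get('status')!r} after {timeout}s"
            )
        sleep(interval)
```

For latency-sensitive paths, a webhook avoids holding a polling loop open through the boot period.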

Customization and Testing

- Web Playground: Test model parameters and view outputs directly in your browser before writing code. Limit: Manual testing does not simulate high-volume production traffic.

- Fine-Tuning: Train custom LoRA weights on base models like Flux or SDXL. Limit: Training requires formatted datasets and consumes significant compute credits.
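In practice, the formatted-dataset requirement usually means bundling the training images into a single archive.

This sketch assumes a convention common to LoRA trainers; it is not a documented Replicate rule. The archive is a zip of images, and any caption sits in a `.txt` file with the same stem, so `a.png` pairs with `a.txt`. Check the specific trainer's input schema for the layout it actually expects.

```python
import io
import os
import zipfile

# Assumed set of image types a trainer will accept.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".webp"}


def build_lora_dataset(images, captions=None):
    """Pack images and optional captions into an in-memory zip archive.

    `images` maps file names to raw bytes; `captions` maps an image's stem to
    its caption text. The same-stem .txt caption layout is an assumption.
    """
    captions = captions or {}
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        for name, data in sorted(images.items()):
            stem, suffix = os.path.splitext(name)
            if suffix.lower() not in IMAGE_SUFFIXES:
                continue  # skip anything that is not an image
            archive.writestr(name, data)
            if stem in captions:
                archive.writestr(f"{stem}.txt", captions[stem])
    return buffer.getvalue()
```

You can write the returned bytes to disk or upload them to wherever the trainer reads its input archive from.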

Replicate Pros and Cons

Pros

- Zero infrastructure management removes the need to configure Kubernetes or install GPU drivers.

- For public models, pay-per-second billing means you pay only for the time the model spends processing your request.

- Extensive documentation and SDKs allow developers to integrate models in under 10 lines of code.

- Proprietary caching layers reduce model load times compared to standard Docker deployments.

Cons

- Initial requests to inactive models take 10 to 30 seconds to boot.

- High-volume production traffic costs more than reserved instances on AWS or GCP.

- Opaque error messages make debugging custom Cog containers difficult during the build process.
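The cost trade-off between per-second billing and reserved instances reduces to one number: the share of each hour a model must be busy before an always-on instance becomes cheaper.

Both rates in this sketch are placeholders for current prices. It also ignores cold-start seconds, data transfer, and the engineering time a self-managed instance needs, so treat the result as a rough guide.

```python
SECONDS_PER_HOUR = 3600


def break_even_utilization(per_second_rate, reserved_hourly_rate):
    """Busy fraction of each hour above which an always-on instance is cheaper.

    Both arguments are placeholder prices in the same currency: the per-second
    rate for comparable hardware on Replicate and the hourly reserved-instance rate.
    """
    if per_second_rate <= 0:
        raise ValueError("per_second_rate must be positive")
    busy_seconds = reserved_hourly_rate / per_second_rate
    # Cap at 1.0: if break-even needs more than a full hour, per-second always wins.
    return min(busy_seconds / SECONDS_PER_HOUR, 1.0)
```

Consider a hypothetical rate of $0.001 per second against a reserved instance at $1.80 per hour. The result is about 0.5, so a model busy more than half of every hour is likely cheaper on the reserved instance.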

Who Should Use Replicate?

- Solo Developers: You can build and launch AI applications without hiring a DevOps engineer.

- Prototyping Teams: You can test multiple models quickly using the web playground and simple API calls.

- Enterprise Production Teams: This platform is not a good fit for teams with massive, predictable traffic volumes. These teams usually spend less on reserved or dedicated instances from AWS or GCP.

Replicate Pricing and Plans

Replicate does not sell subscription tiers. It uses pay-as-you-go billing based on the compute your predictions consume.

Public models bill per second of processing time, at a rate set by the hardware the model runs on, from CPUs up to high-end GPUs. You are not charged while a public model sits idle.

Some official models are priced per output instead, such as per generated image or per input and output token.

Private models and deployments run on dedicated hardware and bill for the whole time an instance is online, including setup and idle time.

Enterprise customers with high volumes can negotiate custom pricing and dedicated support.

Because the bill grows in step with usage, track spending closely as traffic scales.
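Under per-second billing, a first-order monthly estimate is prediction volume times average run time times the hardware rate.

This sketch uses placeholder numbers rather than Replicate's published prices. It assumes public-model billing, where idle time costs nothing. Setup and idle time on dedicated hardware would come on top.

```python
def estimate_monthly_cost(predictions_per_month, avg_seconds, per_second_rate):
    """Approximate monthly spend for a model billed only while it processes requests.

    All inputs are placeholders; cold boots, retries, and idle time on dedicated
    hardware are not included.
    """
    if min(predictions_per_month, avg_seconds, per_second_rate) < 0:
        raise ValueError("inputs must be non-negative")
    return predictions_per_month * avg_seconds * per_second_rate
```

For example, 50,000 predictions a month at 4 seconds each and a hypothetical $0.001 per second comes to roughly $200.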

How Replicate Compares to Alternatives

Like Hugging Face, Replicate gives you access to thousands of open-source models. Hugging Face Inference Endpoints, however, runs each model on a dedicated instance that you provision. That instance is priced by the hour and keeps billing while idle unless you enable scale-to-zero. Replicate's public models scale to zero automatically and bill by the second. This makes Replicate cheaper for applications with intermittent or unpredictable traffic.

Unlike Fal.ai, Replicate covers a broader range of models beyond image and video generation. Fal.ai focuses heavily on ultra-low latency inference for media models. Replicate provides better support for language processing and audio synthesis tasks.

Best For Fast Prototyping and Small Teams

Solo developers and small teams get the most value from Replicate. You can test ideas quickly without managing complex cloud infrastructure.

Teams with high-volume, predictable traffic should look elsewhere. The pay-per-second model becomes expensive at scale.

If you need cheaper compute for massive workloads, consider renting dedicated GPUs on RunPod.

The cold start latency remains the biggest hurdle for real-time applications.