What is VibeVoice-1.5B?

Are you trying to map a single actor’s emotional delivery onto multiple languages without losing the original performance? VibeVoice-1.5B targets exactly that problem. Microsoft built this 1.5-billion-parameter speech-to-speech model to transfer vocal characteristics and emotional delivery from one audio clip to another. It sits in the AI voice generator category and focuses entirely on expressive voice conversion: the model takes input speech and maps its pacing and tone onto a separate target voice.

Think of it like making a pan sauce: the original emotional recording is the roasted flavor left in the pan, and the target voice is the liquid you add. They fuse into a single consistent base that you can apply to different localization tasks. Developers deploying this open-weight model via Hugging Face typically use it for video game localization or prototyping character voice skins.

- Primary Use Case: Converting neutral voice recordings into expressive emotional performances across multiple languages.

- Ideal For: Machine learning engineers and localization teams with access to heavy GPU compute.

- Pricing: Free to download (open weights). Compute costs depend entirely on your hosting infrastructure.

Key Features and How VibeVoice-1.5B Works

Voice Cloning and Emotional Control

- Zero-shot Voice Cloning: The model replicates a target voice using a reference clip as short as three seconds. This data efficiency cuts the time needed to build custom acoustic profiles.

- Vibe-based Control: Developers can manipulate the latent space to adjust the emotional output. The catch is that dialing in an exact emotion requires precise programmatic tuning through the API.

- Contextual Prosody: The system models natural speech patterns. It maintains the original speaker’s pitch and rhythm even when mapping the audio to a completely different language.
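
One common way to think about adjusting a latent space is linear interpolation between two embedding vectors. The sketch below is only an illustration of that general idea, not VibeVoice-1.5B's actual control interface: the function name `blend_embeddings`, the `strength` parameter, and the tiny four-dimensional vectors are all hypothetical.

```python
from typing import List


def blend_embeddings(neutral: List[float], target: List[float], strength: float) -> List[float]:
    """Linearly interpolate from `neutral` toward `target`.

    strength=0.0 returns the neutral vector, strength=1.0 returns the target.
    """
    if len(neutral) != len(target):
        raise ValueError("embeddings must have the same dimensionality")
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0.0 and 1.0")
    return [(1.0 - strength) * n + strength * t for n, t in zip(neutral, target)]


# Hypothetical 4-dimensional vectors; real embeddings would be far larger.
neutral_vec = [0.0, 0.0, 0.0, 0.0]
excited_vec = [1.0, -2.0, 0.5, 4.0]

# Halfway between the neutral and the "excited" delivery.
half_excited = blend_embeddings(neutral_vec, excited_vec, 0.5)
print(half_excited)
```

Sweeping `strength` in small steps and listening to each result is one plausible way to approach the programmatic tuning described above, assuming the model exposes embeddings that behave roughly linearly.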

Architecture and Performance

- 1.5 Billion Parameters: Microsoft designed a large-scale transformer architecture for this model. The parameter count gives it the capacity to handle complex linguistic nuances.

- Cross-lingual Synthesis: You can convert speech between languages while preserving the original speaker’s timbre. Unlike simple text-to-speech translation, it prioritizes the acoustic delivery.

- Real-time Inference: The architecture supports low-latency performance. However, reaching these speeds requires enterprise-grade hardware such as NVIDIA A100 or H100 GPUs.
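
For a rough sense of scale, the memory needed just to hold 1.5 billion parameters can be estimated as parameter count times bytes per parameter. The sketch below is back-of-the-envelope arithmetic only. It assumes standard numeric widths and ignores activations, caches, and any audio codec components, all of which add to real-world usage. The helper name `weight_memory_gib` is made up for illustration.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2, "int8": 1}


def weight_memory_gib(num_params: int, dtype: str = "float16") -> float:
    """Return the memory needed for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / (1024 ** 3)


PARAMS = 1_500_000_000  # parameter count stated for the model

fp16 = weight_memory_gib(PARAMS, "float16")  # about 2.8 GiB
fp32 = weight_memory_gib(PARAMS, "float32")  # about 5.6 GiB

print(f"float16 weights: {fp16:.2f} GiB")
print(f"float32 weights: {fp32:.2f} GiB")
```

The weights are only the floor. Runtime memory during inference will be higher, so treat these figures as a lower bound when sizing a GPU.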

Deployment and Integration

- Hugging Face Integration: The model works with standard PyTorch-based Python libraries. Teams can integrate it into existing audio processing pipelines without adopting a new toolchain.

- 24kHz Audio Output: VibeVoice-1.5B generates high-fidelity audio. This sample rate meets the minimum requirements for professional media production and game assets.
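
When audio from any generator enters a production pipeline, it helps to confirm the sample rate before mixing it with other assets. The sketch below uses only Python's standard `wave` module. The helper names `wav_sample_rate` and `make_silent_wav` are illustrative, and the silent clip merely stands in for real model output.

```python
import io
import wave

EXPECTED_RATE_HZ = 24_000  # sample rate stated for the model's output


def wav_sample_rate(data: bytes) -> int:
    """Read the sample rate from the header of in-memory WAV data."""
    with wave.open(io.BytesIO(data), "rb") as wav:
        return wav.getframerate()


def make_silent_wav(duration_s: float, rate_hz: int = EXPECTED_RATE_HZ) -> bytes:
    """Build a mono 16-bit silent WAV clip, used here as stand-in audio."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit PCM
        wav.setframerate(rate_hz)
        wav.writeframes(b"\x00\x00" * int(duration_s * rate_hz))
    return buffer.getvalue()


clip = make_silent_wav(1.0)
rate = wav_sample_rate(clip)
if rate != EXPECTED_RATE_HZ:
    raise ValueError(f"expected {EXPECTED_RATE_HZ} Hz, got {rate} Hz")
print(f"sample rate OK: {rate} Hz")
```

A check like this catches accidental resampling early, before mismatched rates cause pitch or speed errors downstream.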

VibeVoice-1.5B Pros and Cons

Strengths

- The model delivers exceptional emotional accuracy that outperforms traditional text-to-speech systems in expressive audio tasks.

- Zero-shot cloning requires only three seconds of audio. This allows for rapid prototyping of custom voice skins.

- The architecture handles complex linguistic nuances in multilingual environments without losing the base speaker identity.

- Microsoft’s research infrastructure backs the model weights. The neural audio codec ensures highly efficient processing of discrete audio tokens.

Limitations

- Local deployment requires massive VRAM resources. Production environments need 16GB to 24GB of VRAM for stable inference.

- The model occasionally hallucinates non-speech sounds. These artifacts tend to appear during silent parts of the audio track.

- Community documentation for fine-tuning is currently scarce. By comparison, older models such as RVC have extensive tutorials.

- Licensing restrictions attached to Microsoft’s research models often prohibit commercial monetization.
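
One generic way to suppress artifacts in silent passages is a simple energy gate applied after generation. The sketch below is a post-processing illustration, not part of the model: it zeroes any frame whose RMS level falls below a threshold. The function name `gate_quiet_frames`, the 480-sample frame (20 ms at 24kHz), and the 0.01 threshold are all assumptions you would tune by ear.

```python
import math
from typing import List


def gate_quiet_frames(samples: List[float], frame_len: int = 480,
                      threshold: float = 0.01) -> List[float]:
    """Zero out frames whose RMS level is below `threshold`.

    `samples` are floats in [-1.0, 1.0]; the output has the same length.
    """
    gated: List[float] = []
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(s * s for s in frame) / len(frame))
        # Keep frames that carry signal; replace near-silent ones with zeros.
        gated.extend(frame if rms >= threshold else [0.0] * len(frame))
    return gated


# One loud frame followed by one frame of faint noise.
loud = [0.5] * 480
faint = [0.001] * 480
cleaned = gate_quiet_frames(loud + faint)
print(cleaned[0], cleaned[-1])
```

A hard gate like this can clip quiet speech or produce audible clicks at frame boundaries, so a production pipeline would likely add fades or use a voice activity detector instead.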

Who Should Use VibeVoice-1.5B?

- Localization Engineering Teams: Engineers translating game dialog will appreciate the cross-lingual synthesis. The model keeps the original voice actor’s timbre consistent across different regional releases.

- AI Voice Researchers: Academic and corporate researchers get direct access to a 1.5 billion parameter model. They can study contextual prosody and vibe-based control natively.

- Solo Content Creators: This model is a poor fit for individual creators. The hardware requirements and the lack of a consumer graphical interface make it effectively unusable for non-developers.

VibeVoice-1.5B Pricing and Plans

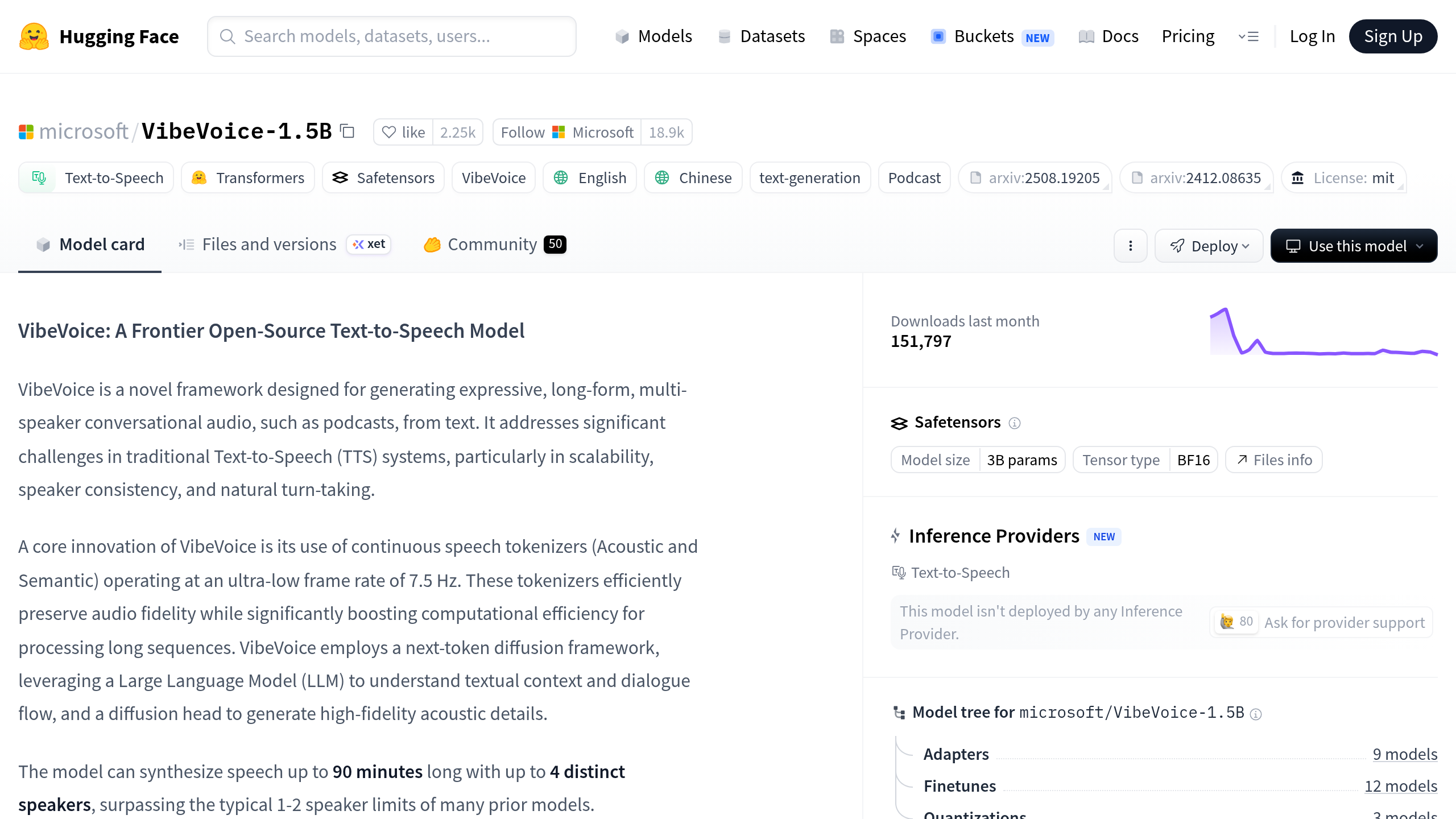

VibeVoice-1.5B does not operate on a traditional software subscription model. Microsoft released the model weights openly on Hugging Face. The software itself is free to download and run. That said, the actual cost of operation depends entirely on your compute environment. Running a 1.5 billion parameter model in production requires expensive enterprise hardware.

If you host this on AWS or RunPod, you must pay for high-end instances. These compute instances typically cost between $2.00 and $4.00 per hour. In short, you save on software licensing but pay heavily for cloud compute. You must also check the Microsoft research license carefully before deploying this in a public tool.
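
To turn the hourly range above into a budget figure, multiply it by your expected usage. The sketch below assumes a hypothetical workload of 8 hours a day for 22 working days a month. Only the $2.00 to $4.00 hourly range comes from the text. The helper name `compute_cost_usd` is made up for illustration.

```python
def compute_cost_usd(hours: float, hourly_rate_usd: float) -> float:
    """Return the cost of running one instance for `hours` at a flat hourly rate."""
    return hours * hourly_rate_usd


LOW_RATE_USD = 2.00   # lower bound of the hourly range quoted above
HIGH_RATE_USD = 4.00  # upper bound of the hourly range quoted above

# Hypothetical usage pattern: 8 hours a day, 22 working days a month.
hours_per_month = 8 * 22

low_estimate = compute_cost_usd(hours_per_month, LOW_RATE_USD)
high_estimate = compute_cost_usd(hours_per_month, HIGH_RATE_USD)

print(f"{hours_per_month} h/month: ${low_estimate:.2f} to ${high_estimate:.2f}")
```

Under those assumptions a single instance costs between $352 and $704 per month. An always-on deployment (roughly 730 hours a month) would cost several times more.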

How VibeVoice-1.5B Compares to Alternatives

ElevenLabs dominates the commercial voice cloning market. ElevenLabs offers a managed API and a highly polished web interface. You pay a monthly fee and make API calls without managing any infrastructure. VibeVoice-1.5B requires you to provision your own GPUs and write your own inference scripts. ElevenLabs is better for rapid commercial deployment, but VibeVoice-1.5B gives developers total access to the underlying model weights.

Meta Voicebox is another major research model in the generative audio space. Voicebox excels at tasks like noise removal and text-guided audio editing, whereas VibeVoice-1.5B focuses much more heavily on speech-to-speech emotional transfer. Both models, however, share similar restrictive research licenses that limit how you can monetize their outputs.

The Right Pick for Enterprise Localization Engineers

VibeVoice-1.5B offers excellent capabilities for teams moving audio across languages. The model captures emotional performances and replicates them with high fidelity. Developers building complex localization pipelines will find immense value in the open model weights. However, the hardware demands and the sparse documentation make it too difficult for casual users to implement.

If you need high-quality voice cloning right now without managing servers, use ElevenLabs. ElevenLabs gives you a production-ready API on day one. Teams with heavy GPU resources and a need for strict acoustic control should download VibeVoice-1.5B and test it.