What is LAION?

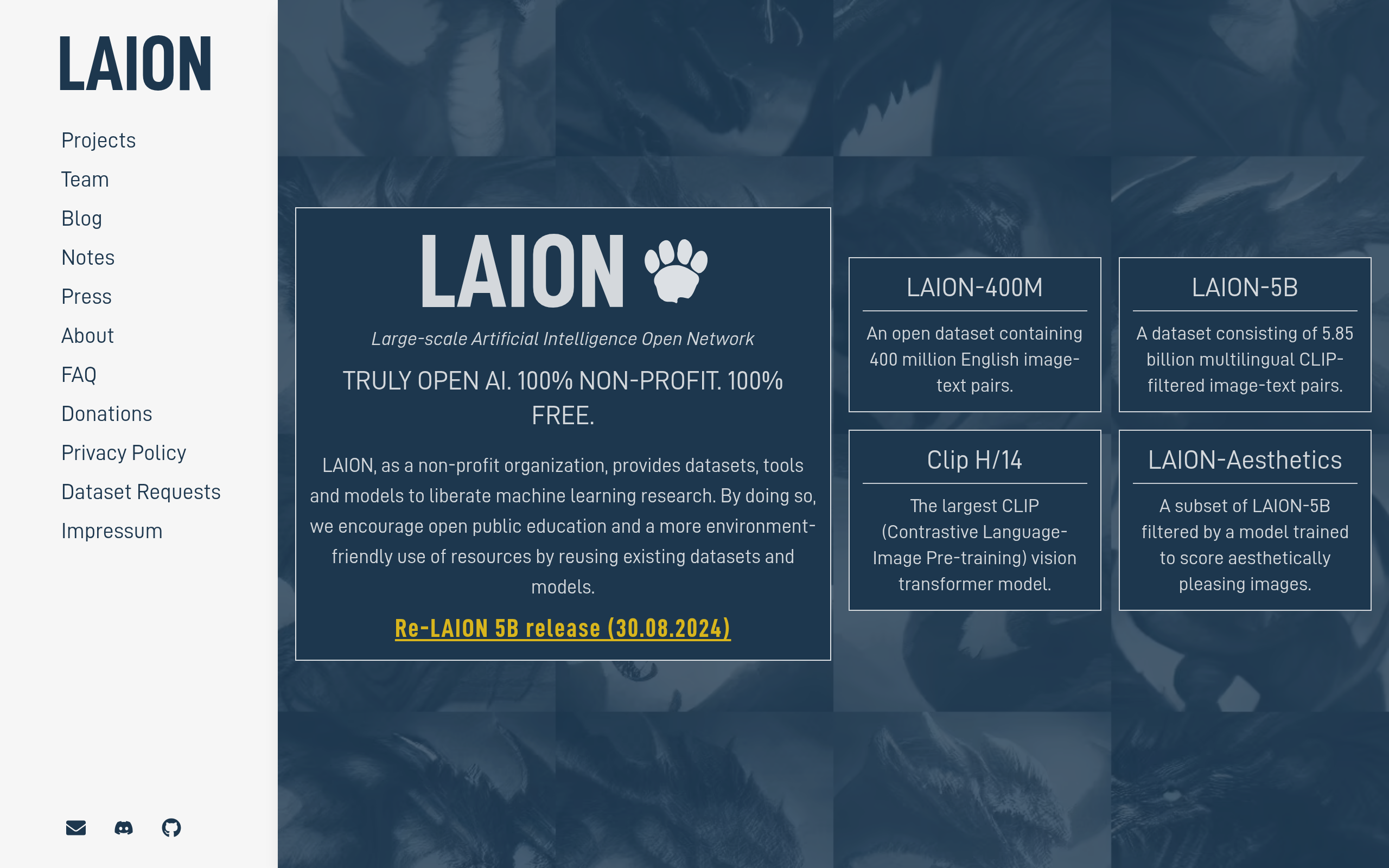

LAION is an open data project that publishes massive datasets for training artificial intelligence models. Run by the non-profit organization LAION e.V., it functions primarily as a repository of billions of image-text pairs. Researchers use these datasets to train text-to-image generators like Stable Diffusion.

The organization solves the problem of data scarcity for independent AI researchers. Before LAION released its 5.85 billion pair dataset, only massive tech companies had enough data to train large vision models. The primary audience includes academic researchers, machine learning engineers, and open-source AI developers.

- Primary Use Case: Training large-scale text-to-image models using billions of filtered image-text pairs.

- Ideal For: Machine learning researchers and academic institutions with high compute budgets.

- Pricing: Free ($0). All datasets and models are openly available at no cost.

Key Features and How LAION Works

Massive Image-Text Datasets

- LAION-5B: Provides 5.85 billion CLIP-filtered image-text pairs for training vision models. The limit is that it only provides URLs, meaning users must download the actual images themselves.

- LAION-Aesthetics: Filters the main dataset for high visual quality using a trained linear estimator. The limit is that aesthetic scoring relies on subjective human ratings that may introduce cultural bias.
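
The two filtering ideas above (keeping pairs whose image and caption agree under CLIP, then scoring images with a linear estimator) can be sketched in a few lines. This is an illustration under stated assumptions, not LAION's actual pipeline: the embeddings are toy 3-dimensional vectors rather than real CLIP outputs, the 0.28 threshold and the weights are example values, and the function names are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def clip_filter(pairs, threshold=0.28):
    """Keep pairs whose image and text embeddings are similar enough.

    The threshold is an assumed example value.
    """
    return [
        p for p in pairs
        if cosine_similarity(p["image_emb"], p["text_emb"]) >= threshold
    ]

def aesthetic_score(image_emb, weights, bias):
    """Linear estimator: weighted sum of the embedding plus a bias term."""
    return sum(w * x for w, x in zip(weights, image_emb)) + bias

# Toy data: the first caption matches its image, the second does not.
pairs = [
    {"url": "https://example.com/cat.jpg",
     "image_emb": [1.0, 0.0, 0.0], "text_emb": [0.9, 0.1, 0.0]},
    {"url": "https://example.com/ad.jpg",
     "image_emb": [0.0, 1.0, 0.0], "text_emb": [1.0, 0.0, 0.0]},
]
kept = clip_filter(pairs)
```

In a real setting the embeddings would come from a CLIP model and the weights from a regressor fitted on human ratings, which is where the rating bias mentioned above enters.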

Audio and Language Resources

- LAION-Audio-630K: Contains 633,526 audio-text pairs for audio-language research. The limit is the relatively small size compared to the billions of images in their vision datasets.

- OpenAssistant: Offers a conversational AI dataset with over 161,000 human-generated interactions. The limit is that fine-tuning requires significant manual effort to format the data for specific model architectures.
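
To give a sense of the formatting work involved, here is a minimal sketch that turns a conversation tree into flat prompt/response pairs. The field names (`message_id`, `parent_id`, `role`, `text`) and the role values are assumed to match the published OpenAssistant schema and should be checked against the actual files; `build_pairs` is a hypothetical helper, and the output format is only one of many a given model might expect.

```python
def build_pairs(messages):
    """Pair each assistant reply with the prompter message it answers."""
    by_id = {m["message_id"]: m for m in messages}
    pairs = []
    for m in messages:
        # Root messages have no parent, so the lookup returns None.
        parent = by_id.get(m["parent_id"])
        if (m["role"] == "assistant"
                and parent is not None
                and parent["role"] == "prompter"):
            pairs.append({"prompt": parent["text"], "response": m["text"]})
    return pairs

# Toy tree: one question with two alternative answers and a follow-up.
messages = [
    {"message_id": "a", "parent_id": None, "role": "prompter",
     "text": "What is CLIP?"},
    {"message_id": "b", "parent_id": "a", "role": "assistant",
     "text": "A model that embeds images and text in one space."},
    {"message_id": "c", "parent_id": "a", "role": "assistant",
     "text": "An image-text contrastive model."},
    {"message_id": "d", "parent_id": "b", "role": "prompter",
     "text": "Who trained it?"},
]
pairs = build_pairs(messages)
```

This keeps only single-turn pairs. Multi-turn training would need to walk each branch from the root and apply the chat template of the target model.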

Search and Retrieval Tools

- CLIP Retrieval: Allows users to search through billions of images using text or image queries. The limit is that the API can experience high latency during peak usage times.

- Dataset Safety Tools: Includes metadata for filtering explicit content and blurred faces. The limit is that automated filtering misses some explicit content due to the sheer volume of data.
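
Safety filtering on the metadata can be sketched as a simple row filter. The column names used here (`punsafe`, `pwatermark`, `WIDTH`, `HEIGHT`, `URL`) and all thresholds are assumptions for illustration; check them against the metadata files you actually download. `filter_metadata` is a hypothetical helper.

```python
def filter_metadata(rows, max_unsafe=0.1, max_watermark=0.5, min_side=256):
    """Drop rows flagged as likely unsafe, watermarked, or too small.

    Rows missing a score are treated as unsafe or watermarked, which is
    a deliberately conservative choice.
    """
    kept = []
    for row in rows:
        if row.get("punsafe", 1.0) > max_unsafe:
            continue
        if row.get("pwatermark", 1.0) > max_watermark:
            continue
        if min(row.get("WIDTH", 0), row.get("HEIGHT", 0)) < min_side:
            continue
        kept.append(row)
    return kept

rows = [
    {"URL": "https://example.com/1.jpg", "punsafe": 0.01,
     "pwatermark": 0.1, "WIDTH": 512, "HEIGHT": 512},
    {"URL": "https://example.com/2.jpg", "punsafe": 0.95,
     "pwatermark": 0.1, "WIDTH": 512, "HEIGHT": 512},
    {"URL": "https://example.com/3.jpg", "punsafe": 0.02,
     "pwatermark": 0.1, "WIDTH": 120, "HEIGHT": 90},
    {"URL": "https://example.com/4.jpg",
     "pwatermark": 0.1, "WIDTH": 512, "HEIGHT": 512},
]
safe_rows = filter_metadata(rows)
```

Because the scores are model predictions, a filter like this lowers the rate of unwanted content but does not remove it, which is consistent with the limitation noted above.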

LAION Pros and Cons

Pros

- Provides over 5 billion data points for free, allowing small labs to train models.

- Maintains high transparency by documenting all methodologies on GitHub for public audit.

- Offers one of the largest publicly available image-text datasets on the internet.

- Supports a highly active Discord community of over 20,000 researchers building open-source projects.

Cons

- Faces significant legal controversies regarding copyright and the inclusion of non-consensual imagery.

- Requires massive compute resources (often hundreds of GPUs) just to process the downloaded data.

- Suffers from link rot because the dataset only provides URLs instead of hosted images.
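
Link rot is easy to quantify on a sample before committing to a full download. The sketch below is a hypothetical helper, not part of any LAION tooling: it takes a `fetch` callable supplied by the caller, so the example runs without network access, and reports the fraction of URLs that failed.

```python
def measure_link_rot(urls, fetch):
    """Try every URL with `fetch`; return (working urls, failure rate).

    `fetch` is any callable that raises an exception when a URL cannot
    be retrieved.
    """
    alive = []
    for url in urls:
        try:
            fetch(url)
            alive.append(url)
        except Exception:
            # Dead links, timeouts, and HTTP errors all count as failures.
            pass
    failure_rate = 1 - len(alive) / len(urls) if urls else 0.0
    return alive, failure_rate

# Stand-in fetcher for the example: pretends one host has disappeared.
def fake_fetch(url):
    if "gone.example" in url:
        raise IOError("host not found")
    return b"image bytes"

sample = [
    "https://a.example/1.jpg",
    "https://gone.example/2.jpg",
    "https://b.example/3.jpg",
    "https://c.example/4.jpg",
]
alive, failure_rate = measure_link_rot(sample, fake_fetch)
```

In practice `fetch` could wrap `urllib.request.urlopen` with a timeout, and the measured failure rate can then be used to estimate how many pairs will actually survive a full download.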

Who Should Use LAION?

- Academic Researchers: University labs use these datasets to study bias, safety, and vision-language performance. The open nature allows for peer-reviewed validation.

- Open-Source AI Developers: Teams building alternatives to proprietary models use LAION-5B as their foundational training data. It provides the scale necessary for competitive performance.

- Solo Hobbyists (Not Recommended): Independent developers without massive server budgets will struggle here. Processing billions of URLs requires enterprise-grade hardware and bandwidth.

The data itself is free, but the cost of downloading and processing it changes the equation entirely.

LAION Pricing and Plans

LAION operates entirely as a free resource. The organization does not charge for access to its datasets or models.

- Open Access ($0/mo): Grants unlimited access to LAION-5B, LAION-400M, OpenCLIP, and all research tools. This is a genuinely free tier, not a disguised trial. However, users must pay their own cloud computing costs to download and process the data.
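
A back-of-the-envelope calculation shows how "free" data still turns into an infrastructure bill. Every input below is an assumption to replace with your own numbers: the average image size, the share of URLs that still resolve, and the sustained bandwidth are illustrative, and `estimate_download` is a hypothetical helper.

```python
def estimate_download(num_pairs, avg_image_kb, success_rate, bandwidth_mbps):
    """Return (storage in TB, download time in days) for one scenario.

    Uses decimal units: 1 KB = 1000 bytes, 1 TB = 1e12 bytes,
    1 Mbps = 1e6 bits per second.
    """
    images = num_pairs * success_rate
    total_bytes = images * avg_image_kb * 1000
    storage_tb = total_bytes / 1e12
    seconds = total_bytes * 8 / (bandwidth_mbps * 1e6)
    return storage_tb, seconds / 86400

# Assumed scenario: 5.85 billion URLs, 40 KB per resized image,
# 90% of links still alive, 10 Gbit/s sustained throughput.
storage_tb, days = estimate_download(5.85e9, 40, 0.9, 10_000)
```

Under these assumed inputs the result is roughly 210 TB of storage and about two days of transfer at a fully saturated 10 Gbit/s link, before any compute for decoding, resizing, or training is counted.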

How LAION Compares to Alternatives

LAION builds on Common Crawl rather than crawling the web itself: it extracts image URLs and their alt text from Common Crawl's web archives, then filters the pairs using CLIP. Common Crawl provides raw web page data, which is excellent for training large language models. This makes LAION the better fit for vision-language models, while Common Crawl wins for pure text generation.

Hugging Face operates as a hosting platform for models and datasets rather than as a dataset creator. You can find LAION datasets hosted on Hugging Face. Hugging Face also provides infrastructure to run models directly in the browser, which is useful for quick testing. LAION only provides the raw data and code, leaving all execution to the user.

Verdict: The Ideal Resource for Well-Funded AI Labs

LAION provides unmatched value for institutional researchers and funded AI startups. If you need billions of image-text pairs to train a foundation model, this is your primary option. Solo developers should look elsewhere. If you just want to fine-tune an existing model, use Hugging Face instead of downloading raw LAION data.

The landscape of AI training data is shifting rapidly. Expect LAION to face stricter regulatory scrutiny over the next 12 months, likely forcing them to implement stricter opt-out mechanisms for copyrighted content.